# Visual Reasoning

Chinese Picks

QVQ Max

QVQ-Max is a visual reasoning model from the Qwen team that understands and analyzes image and video content and proposes solutions. It goes beyond text input to handle complex visual information, which makes it suitable for users who need multimodal processing in education, work, and everyday life, including students, professionals, and creative individuals. It is built on deep learning and computer vision techniques. This is the initial release, and further optimizations are planned.

AI Model

52.2K

Aya Vision 32B

Aya Vision 32B is an advanced vision-language model developed by Cohere For AI, boasting 32 billion parameters and supporting 23 languages, including English, Chinese, and Arabic. This model combines the latest multilingual language model Aya Expanse 32B and the SigLIP2 vision encoder, achieving visual and language understanding integration through a multimodal adapter. It excels in the vision-language field, capable of handling complex image and text tasks such as OCR, image captioning, and visual reasoning. The release of this model aims to promote the popularization of multimodal research, providing a powerful tool for global researchers with its open-source weights. The model is licensed under CC-BY-NC and is subject to Cohere For AI's fair use policy.

AI Model

67.3K

Alphamaze V0.2 1.5B

AlphaMaze is a project focused on enhancing the visual reasoning abilities of Large Language Models (LLMs). It trains models through maze tasks described in text format, enabling them to understand and plan in spatial structures. This method avoids complex image processing and directly assesses the model's spatial understanding through text descriptions. Its main advantage is the ability to reveal how the model thinks about spatial problems, rather than simply whether it can solve them. The model is based on open-source frameworks and aims to promote research and development of language models in the field of visual reasoning.

AI Model

50.5K

Alphamaze

AlphaMaze is a decoder-only language model designed for visual reasoning tasks. It demonstrates the potential of language models in visual reasoning through training on maze-solving tasks. The model is built on the 1.5-billion-parameter Qwen model and is trained with Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL). Its main advantage is that it transforms visual tasks into text for reasoning, compensating for the weak spatial understanding of traditional language models. The model was developed to improve AI performance on visual tasks, especially those that require step-by-step reasoning. AlphaMaze is currently a research project, and its commercial pricing and market positioning have not been defined.

AI Model

46.9K
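The text-based maze idea described in the two AlphaMaze entries above can be illustrated with a minimal Python sketch. The serialization format, the `MAZE` layout, and the helper names `maze_to_text` and `solve` are assumptions made for illustration and are not AlphaMaze's actual token format or training code. The breadth-first search stands in for the kind of reference solution a model's step-by-step answer could be checked against.

```python
from collections import deque

# Hypothetical 3x3 maze. Keys are (row, col); values list the open directions
# (U, D, L, R) from that cell, i.e. the sides without a wall.
MAZE = {
    (0, 0): "R",  (0, 1): "LD",  (0, 2): "D",
    (1, 0): "RD", (1, 1): "ULR", (1, 2): "ULD",
    (2, 0): "UR", (2, 1): "L",   (2, 2): "U",
}

STEP = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}


def maze_to_text(maze, start, goal):
    """Describe the maze as plain text, one line per cell (illustrative format)."""
    lines = [f"start={start} goal={goal}"]
    for (r, c), opens in sorted(maze.items()):
        lines.append(f"cell ({r},{c}) open: {' '.join(opens)}")
    return "\n".join(lines)


def solve(maze, start, goal):
    """Return a shortest move sequence as a string of U/D/L/R, or None."""
    queue = deque([(start, "")])
    seen = {start}
    while queue:
        cell, path = queue.popleft()
        if cell == goal:
            return path
        for d in maze[cell]:
            dr, dc = STEP[d]
            nxt = (cell[0] + dr, cell[1] + dc)
            if nxt in maze and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + d))
    return None


if __name__ == "__main__":
    print(maze_to_text(MAZE, (0, 0), (2, 2)))  # text a language model would read
    print(solve(MAZE, (0, 0), (2, 2)))         # reference answer for comparison
```

Because the maze is given purely as text, the model's intermediate moves can be compared step by step with the reference path, which is what makes the reasoning process inspectable.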

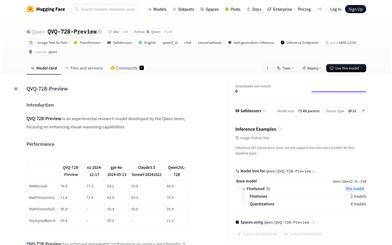

QVQ 72B Preview

QVQ-72B-Preview is an experimental research model developed by the Qwen team, focusing on enhancing visual reasoning capabilities. The model demonstrates strong abilities in multidisciplinary understanding and reasoning, achieving significant advances especially in mathematical reasoning tasks. Although advancements have been made in visual reasoning, it does not completely replace the capabilities of Qwen2-VL-72B, and may gradually lose focus on image content in multi-step visual reasoning, leading to hallucinations. Furthermore, QVQ does not show significantly better performance in basic recognition tasks compared to Qwen2-VL-72B.

AI Model

59.6K

Claude 3.5 Sonnet

Claude 3.5 Sonnet, developed by Anthropic, strikes a remarkable balance between intelligence, speed, and cost. This model sets new industry benchmarks in graduate-level reasoning, undergraduate-level knowledge, and programming proficiency. It excels at understanding nuances, humor, and complex instructions, and can generate high-quality content in a natural and friendly tone. Additionally, it demonstrates strong capabilities in visual reasoning, chart interpretation, and image-to-text transcription, making it an ideal choice for industries like retail, logistics, and financial services.

AI Model

122.3K

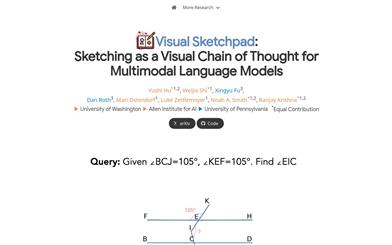

Visual Sketchpad

Visual Sketchpad is a framework that provides a visual sketchpad and drawing tools for multimodal large language models (LLMs). It allows models to operate on visually created elements while planning and reasoning, unlike previous methods that relied solely on text for reasoning steps. Visual Sketchpad enables models to draw using lines, boxes, annotations, and other more human-like drawing elements, thereby facilitating better reasoning. Additionally, it can incorporate expert vision models, such as object detection models for drawing bounding boxes or segmentation models for drawing masks, to further enhance visual perception and reasoning capabilities.

AI Model

53.0K

Fresh Picks

Cantor

Cantor is a multimodal chain-of-thought (CoT) framework that uses a perception-decision architecture to combine visual context acquisition with logical reasoning for complex visual reasoning tasks. Cantor first acts as a decision generator, integrating visual input to analyze the image and the question so that its decisions stay closely aligned with the actual context. It then uses the advanced cognitive capabilities of large language models (LLMs) as multi-faceted experts to derive higher-level information, enriching the CoT generation process. Extensive experiments on two challenging visual reasoning datasets demonstrate the effectiveness of the framework. Notably, Cantor improves multimodal CoT performance without requiring fine-tuning or ground-truth rationales, surpassing existing baselines.

AI Model

51.3K

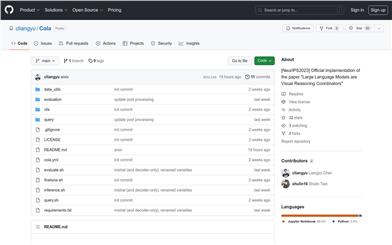

Cola

Cola (COordinative LAnguage model for visual reasoning) is an ensemble method that uses a language model (LM) to aggregate the outputs of two or more vision-language models (VLMs). It performs best when the LM is fine-tuned (Cola-FT), and it is also effective with zero-shot or few-shot in-context learning (Cola-Zero). Beyond the performance gains, Cola is more robust to errors made by individual VLMs. The authors show that Cola applies to a range of VLMs (including large multimodal models such as InstructBLIP) and consistently improves performance across 7 datasets (VQA v2, OK-VQA, A-OKVQA, e-SNLI-VE, VSR, CLEVR, GQA).

AI image detection and recognition

54.4K
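The aggregation step described for Cola above can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: the prompt layout, the `caption` and `answer` fields, and the function names `build_prompt` and `coordinate` are all assumptions, and the `lm` argument is a placeholder for whatever language model (fine-tuned or prompted) does the coordinating.

```python
from typing import Callable, Dict


def build_prompt(question: str, vlm_outputs: Dict[str, Dict[str, str]]) -> str:
    """Concatenate each VLM's output into one text prompt for the coordinating LM.

    The 'caption' and 'answer' keys are an assumed output format.
    """
    parts = []
    for name, out in vlm_outputs.items():
        parts.append(f"{name} caption: {out['caption']}")
        parts.append(f"{name} answer: {out['answer']}")
    parts.append(f"Question: {question}")
    parts.append("Final answer:")
    return "\n".join(parts)


def coordinate(
    question: str,
    vlm_outputs: Dict[str, Dict[str, str]],
    lm: Callable[[str], str],
) -> str:
    """Ask the LM to reconcile the VLM outputs and return its final answer."""
    return lm(build_prompt(question, vlm_outputs)).strip()


if __name__ == "__main__":
    outputs = {
        "VLM-A": {"caption": "two dogs on a beach", "answer": "two"},
        "VLM-B": {"caption": "a dog running near water", "answer": "one"},
    }
    # Placeholder LM that always answers "two"; a real LM call would go here.
    toy_lm = lambda prompt: " two "
    print(build_prompt("How many dogs are there?", outputs))
    print(coordinate("How many dogs are there?", outputs, toy_lm))
```

Keeping the LM behind a plain callable means the same prompt-building code could serve both the fine-tuned and the in-context variants mentioned above.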

Featured AI Tools

Flow AI

Flow is an AI-driven filmmaking tool for creators that uses Google DeepMind's advanced models to help users create movie clips, scenes, and stories. It offers a seamless creative workflow in which users can bring their own assets or generate content directly within Flow. The Google AI Pro and Google AI Ultra plans offer different feature sets to suit different user needs.

Video Production

42.2K

Nocode

NoCode is a platform that requires no programming experience: users describe their ideas in natural language and the platform quickly generates an application. Its goal is to lower development barriers so that more people can realize their ideas. Real-time previews and one-click deployment make it well suited to non-technical users.

Development Platform

44.7K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.0K

Minimax Agent

MiniMax Agent is an AI companion built on the latest multimodal technology. Its MCP-based multi-agent collaboration lets teams of AI agents work together on complex problems. It provides features such as instant answers, visual analysis, and voice interaction, and is claimed to increase productivity tenfold.

Multimodal technology

43.1K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with significantly improved generation speed and image quality. Using a codec with a very high compression ratio and a new diffusion architecture, it can generate images at millisecond-level speeds, avoiding the wait associated with traditional generation. The model also improves image realism and detail by combining reinforcement learning algorithms with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

41.7K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.2K

Fastvlm

FastVLM is an efficient visual encoding model designed for vision-language models. It uses the FastViTHD hybrid visual encoder to reduce both the time required to encode high-resolution images and the number of output tokens, improving speed while maintaining accuracy. FastVLM aims to give developers strong vision-language processing capabilities across a range of scenarios, and it is particularly well suited to mobile devices that require fast responses.

Image Processing

41.4K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI tools that help creators bring their imagination to life. The platform provides a large library of free AI models that users can search and use for image, text, and audio creation. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to making creative tools accessible and to serving the creative industry so that everyone can enjoy creating.

AI Model

6.9M